Context and Project Goals

The STREAM project (SponTaneous REArrangement of ice Motion in ice sheets) aims to investigate polar ice dynamics by developing an innovative ice-flow modelling system. The work was made possible by computing resources awarded by the EuroHPC JU on the LUMI supercomputer.

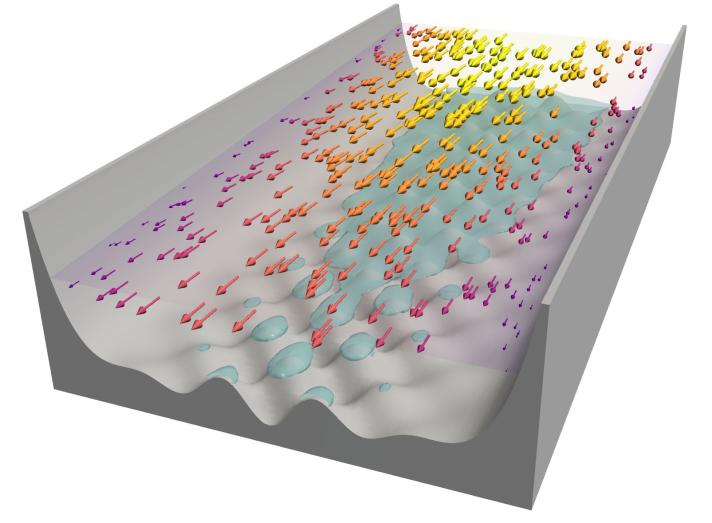

These simulations will support research that seeks to better understand the intricate mechanisms governing the movement of massive ice sheets in regions such as Antarctica and Greenland. Understanding these mechanisms is crucial for predicting how fast glaciers move and for developing more accurate sea-level projections. The faster glaciers move, the higher the flux of ice shed into the ocean and the faster these ice sheets melt, contributing to sea-level rise, one of the most important effects of climate change.

Societal Impacts

By aiming for a more accurate portrayal of ice dynamics, STREAM seeks to simulate scenarios with unprecedented precision. Accurate predictions of sea-level rise are crucial for coastal populations and the global economy, particularly in the face of climate change. By enhancing the precision of these predictions, the project aims to offer valuable insights for mitigation strategies and societal adaptation for a safer future.

Computational Methods

The STREAM research team believes that new techniques in high-performance extreme-scale GPU computing will revolutionise ice flow modelling in the future and provide more accurate predictions.

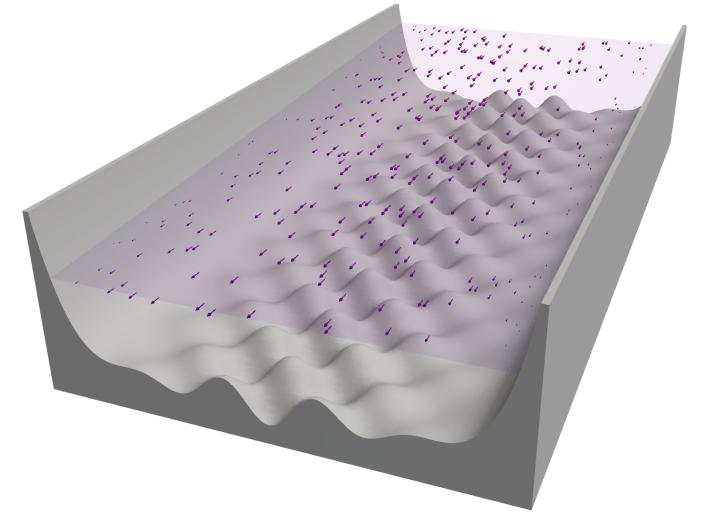

The team tackled the complexities of ice flow dynamics by employing a computational approach that involves solving partial differential equations (PDEs) on GPUs.

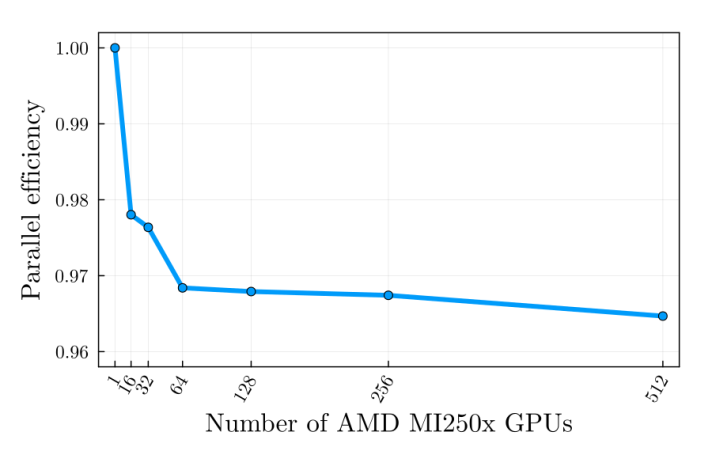

To manage the computational demands posed by the resolution requirements of regional-scale ice flow simulations, the team adopted a distributed-memory parallelisation strategy. Computations run simultaneously across multiple GPUs, each handling one sub-domain of the full problem area. To mitigate slowdowns when scaling to multiple GPUs, the team also ran the local-domain computations in parallel with the exchange of boundary information among the distributed processes, so that the implementation scales as well as possible.
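The sub-domain and boundary-exchange idea can be illustrated with a minimal, single-process sketch. It is not the STREAM code: the 1D diffusion update, the function names (`diffuse`, `split_with_halo`, `exchange_halos`, `gather`) and the use of plain Python lists in place of GPUs and message passing are all assumptions made for illustration. Each sub-domain carries one extra "halo" cell per interior side, updates its own cells independently, and then refreshes its halo cells from its neighbours. The sketch assumes the grid length is divisible by the number of sub-domains, and it performs the exchange after the update rather than concurrently with it.

```python
def diffuse(u, c):
    """One explicit 1D diffusion step; the first and last cells are left unchanged."""
    interior = [u[i] + c * (u[i - 1] - 2 * u[i] + u[i + 1]) for i in range(1, len(u) - 1)]
    return [u[0]] + interior + [u[-1]]


def split_with_halo(u, nparts):
    """Split u into nparts equal chunks, each padded with one halo cell per interior side."""
    n = len(u) // nparts  # assumes len(u) is divisible by nparts
    parts = []
    for p in range(nparts):
        lo = max(p * n - 1, 0)
        hi = min((p + 1) * n + 1, len(u))
        parts.append(list(u[lo:hi]))
    return parts


def exchange_halos(parts):
    """Copy each sub-domain's outermost owned cell into its neighbour's halo cell."""
    for left, right in zip(parts, parts[1:]):
        right[0] = left[-2]  # left's last owned cell -> right's left halo
        left[-1] = right[1]  # right's first owned cell -> left's right halo


def gather(parts):
    """Reassemble the global array from the owned cells of every sub-domain."""
    out = []
    for p, part in enumerate(parts):
        lo = 0 if p == 0 else 1
        hi = len(part) if p == len(parts) - 1 else len(part) - 1
        out.extend(part[lo:hi])
    return out


def run_decomposed(u, nparts, steps, c):
    """Advance the field on nparts sub-domains with a halo exchange after each step."""
    parts = split_with_halo(u, nparts)
    for _ in range(steps):
        parts = [diffuse(part, c) for part in parts]  # independent local updates
        exchange_halos(parts)                         # refresh stale halo cells
    return gather(parts)


def run_global(u, steps, c):
    """Reference: advance the whole field on a single domain."""
    for _ in range(steps):
        u = diffuse(u, c)
    return u
```

Calling `run_decomposed(u0, 3, 20, 0.25)` and `run_global(u0, 20, 0.25)` on the same 12-cell field should give matching results, which is the property a halo exchange is meant to preserve. In a real multi-GPU setting the local updates would run on separate devices and the exchange would go through a communication library, typically overlapped with the interior computation.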

Research Team

Leading this research project is Dr. Ludovic Räss, a computational geoscientist from ETH Zurich, accompanied by Dr. Ivan Utkin, an applied mathematician at ETH Zurich, and Julian Samaroo, a research software engineer from MIT.

Dr. Räss has over a decade of experience in GPU-accelerated research software and leads the computational aspect of the project. Dr. Utkin is an expert in fluid dynamics and numerical methods who worked to bridge physics modelling and software engineering. Mr. Samaroo, a key contributor to the development of the Julia programming language used in this project, ensured that the code is backend-agnostic and compatible with diverse GPUs.

Project Timeline

The STREAM project timeline was organised in distinct phases: a systematic parameter investigation on synthetic bedrock geometries, regional-scale simulations of flow localisation in Greenland, and ice-sheet-scale simulations. At the start of the computational resource allocation, the team performed benchmarking and test simulations to verify the software's accuracy, scalability and performance. The largest simulations were planned for the last part of the project in order to maximise the chances of a successful outcome.

Employment and Future Utilisation of EuroHPC Resources

As the project progressed, continued use of EuroHPC resources such as the LUMI supercomputer was instrumental to the outcome, enabling large-scale simulations that would be impossible on smaller machines.

In the future, the team would like to apply for additional allocations for diverse multi-physics Earth science projects. EuroHPC's support is essential to their ongoing and future research endeavours, ensuring access to cutting-edge computational resources for their scientific pursuits.

The team's numerical method for solving the PDEs is formulated on a regular grid and targets very high spatial resolution using finite differences. While the technique may seem straightforward, it takes advantage of the inherent strengths of GPUs in executing specific operations on regular grids, ensuring optimal memory access patterns and achieving close-to-ideal performance.
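To make the regular-grid, finite-difference idea concrete, here is a minimal sketch of one explicit update for a 2D diffusion equation. The equation, the function name `fd_diffusion_step` and the parameters (`dx`, `dy`, `dt`, `kappa`) are illustrative assumptions, not the ice flow equations or the solver used in STREAM. The point is only that every interior grid cell is updated from its immediate neighbours with the same fixed pattern, which is the kind of regular, predictable memory access that maps well onto GPUs.

```python
def fd_diffusion_step(u, dx, dy, dt, kappa):
    """One explicit finite-difference update of du/dt = kappa * (d2u/dx2 + d2u/dy2).

    u is a list of rows (u[j][i], j along y, i along x) on a regular grid.
    Boundary cells keep their previous values (fixed-value boundaries).
    """
    ny, nx = len(u), len(u[0])
    new = [row[:] for row in u]  # copy, so the update reads only old values
    for j in range(1, ny - 1):
        for i in range(1, nx - 1):
            # Second-order central differences in each direction
            d2x = (u[j][i - 1] - 2.0 * u[j][i] + u[j][i + 1]) / dx**2
            d2y = (u[j - 1][i] - 2.0 * u[j][i] + u[j + 1][i]) / dy**2
            new[j][i] = u[j][i] + dt * kappa * (d2x + d2y)
    return new
```

On a GPU, the two nested loops would typically become a single kernel in which each thread updates one grid cell. Because neighbouring threads read neighbouring memory locations, memory accesses can be coalesced.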